Since we promised to publish a metadata framework as part of our Biml Workshop we’ve had quite a few requests for it, but first let me give some context on its evolution. I started on this framework about two years ago as a part time project to build a black box fully hosted cloud solution. After all, we are allowed to dream.

Being a BI professional, my UI skills were limited and Master Data Services seemed to be the best solution to capture the metadata. Putting the MDS “quirks” aside it worked well for prototyping my concepts and may entirely work for you. Before you go off and customize the solution and invest loads of money building a full blown metadata framework, I would like to point out that we have done this and it’s not for the faint hearted. Now that I have dangled the red rag for the bullish few, I have to point out that we are actively working with the Varigence team convert our framework and web services to be consumed in future Mist releases.

Configuration

Back to the actual framework we need to configure a couple of things.

1) Download a Mist trial from Varigence. The code will work if you are using BidsHelper, however this post references Mist features which will help visualize the in-memory objects created by the metadata.

2) Download and install AdventureWorks2012.

3) Create a couple of databases that ties into the solution (AW_EXT, AW_STG, AW_DWH). You can edit and use AW_CreateDatabases.sql file.

4) Create the extract views in AdventureWorks2012 using AW_CreateViews.sql.

5) Install and configure Master Data Services. If you are using MSSQL 2012 use the provided Biml.pkg file and deploy the package using code from the command prompt (you may need adapt it for your environment).

a. cd "C:\Program Files\Microsoft SQL Server\110\Master Data Services\Configuration"

b. MDSModelDeploy deployclone -package "c:\Biml\Biml.pkg"

If you are using a different version of MDS you can use the excel file (biml_mds.xlsx) to manually create the model and stage the data.

Overview of MDS Data Model

Configurations

The Configurations entity is used to define global settings that describe the different stages of your data warehouse environment. For simplicity we define an Extract/Load, Staging and Data Warehouse connection. You can extend this to hold additional information like table prefixes and add different stages like archive and data marts. I use this more as a grouping of objects similar to a folder for each schema in SSDT.

Connections

Need I say more? You could add a place to add a provider if you want.

SourceObjects

Define the header information for the SourceObjects. In the later non MDS version I combined SourceObjects and TargetObjects and added extra attributes, some for enhanced documentation and others to extend the BimlScripts.

SourceColumns

Define the column metadata for the SourceObjects. Think of extending this with additional columns to store simple SQL type cast or SSIS derived column formulas. Example SQL would be CONVERT(CHAR(8), [[this]], 112) AS [[this]] and SSIS (DT_I4)(REPLACE((DT_WSTR, 50) (DT_DBDATE) [[this]], "-", "")), replacing [[this]] with the relevant column name in the BimlScript.

TargetObjects

Define the header information for the TargetObjects. I use a pattern to infer my Extract/Load tables based on a transformation pattern from the source objects. I’m sure this is where most of you will want to start doing things your way so feel free to go crazy. Similarly I use the target objects to define my staging objects effectively allowing me to only define source and target without having to capture and maintain metadata for every stage of my ETL. I have extended this with attributes to identify Late Arriving dimensions, partition and compression, all attributes available in Biml.

TargetColumns

Define the column metadata for the TargetObjects. Think of extending this with additional attributes to track SCD and possibly use sequence numbers for your type 2 dimensions.

TargetReferenceColumns

Define table references/foreign keys/lookup columns. This is very things get interesting and you will have to think how objects are going to reference each other. Defining the links is the easy part, figuring out how you convert the metadata in useable Biml is where you will spend most of your time. I approached this by writing the code to work for the current project scenario and expand it as new scenarios arise.

TargetColumnMappings

Define the source to target column mappings. Simply put this is the glue that brings everything together and combined with all other metadata and Biml templates will build most of your data warehouse for you. When I hit build on my data warehouse projects and it goes boom, my first port of call is looking at my mappings (copy and paste error normally).

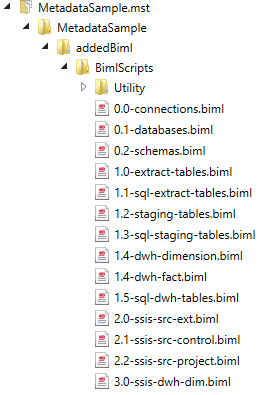

MetadataSample Biml Scripts

Open the MetadataSample Mist project and switch to the Project View. You should now see the following Biml files.

0.0-connections.biml

This is a live BimlScript that simply queries the metadata repository and create Connections.

0.1-databases.biml

This is a live BimlScript that simply queries the metadata repository and create Databases.

0.2-schemas.biml

This is a live BimlScript that simply queries the metadata repository and create Schemas.

1.0-extract-tables.biml

Another live BimlScript that queries the SourceObjects metadata and create the tables. This is where things start getting a little more interesting. Firstly Biml use the System.DbType throughout with a couple of minor exceptions not relevant at the moment. So I created a utility BimlScript that will convert the SourceColumns data types to DbTypes and include it in the file.

<#@ include file="Utility\i-GetSqlToBimlDatatype.biml" #>

Using includes is one way to centralize reusable code. I also configure a couple annotation tags that will referenced later in the projects. In the workshop I added the GetHashColumn later to demonstrate that using Biml you can incrementally build your framework. If you are using SQL as a source you can use the <#@ include file="Utility\i-GetHashColumn.biml" #> to prepare columns for the HASHBYTES function used later on in the project.

1.1-sql-extract-tables.biml

This live BimlScript iterate through the tables create by 1.0-extract-tables.biml and generate the DROP/CREATE SQL scripts that will create all the extract tables.

1.2-staging-tables.biml, 1.4-dwh-dimension.biml, 1.4-dwh-fact.biml

Variations based on 1.0-extract-tables.biml

1.3-sql-staging-tables.biml, 1.5-sql-dwh-tables.biml

Variations based on 1.1-sql-extract-tables.biml

2.0-ssis-src-ext.biml

This Biml file reads the Source metadata and create a very simple package for each Source Object consisting of an OleDbSource, DataConversion and OleDbDestination. Hopefully you can now see the power of centralized reusable scripts like <#@ include file="Utility\i-GetBimlDatatype.biml" #>. If you are developing Biml using Mist you can extend this pattern considerably using Transformers. As an example you could create a Transformer that will add a “Select” RowCount to a OleDbSource components and a “Insert” RowCount to all OleDbDestination components that conform the pattern where Name.StartsWith(“OLE_DST – Insert”) .

2.1-ssis-src-control.biml

Now that you have all the extract packages you want to create a Master or Control package. This Biml file iterate through all packages where the PackageSubpath="EX_AW_EXT" and create package execute tasks.

2.2-ssis-src-project.biml

By default Mist will create a project file for you if you have configured the Mist project for Project Deployment. I prefer to control what is added to the project as project connections and parameters. If you look at the file you will see about 10 lines of code commented out (my code). As I was showing my code to Scott feeling very proud of my achievement at this point he pointed out, very diplomatically that I can replace all of that with this line of code <# foreach(var connection in RootNode.SymbolTable[typeof(AstOleDbConnectionNode)]){#> that you see further down in the file. I discovered the wonders of the SymbolTable and thought to myself, it’s nice to have the creator of Biml helping me with code review.

3.0-ssis-dwh-dim.biml

This is where it all comes together and where you will spend most of your time figuring out what your Biml templates will do. I have deliberately left this file fairly vanilla for a couple of reasons. Firstly as I mentioned we have to build our templates, and now it’s your turn. Secondly I recognize that my implementation of best practices is not the only way and prefer not to get into the “Why do you do it this way” discussions. Things to note is that I reference an external sql file as the source query and use the Tags I created in the 2.0-ssis-src-ext.biml. The rest of it should be self-explanatory. Suggestions here is that you will need to create a SCD, Type 1, Type 2, Reference Lookup, Surrogate Key Lookup and Inferred Member pattern as a start.

Hopefully this gives you a starting point for your own framework and Biml templates.

Comments

Marcin

10:58am 10.01.14

Hu Peter, Really interesting project, thank you for sharing.

However, in SSDT I got an "Object reference not set to an instance of a object" error during TableExtensions.GetColumnList call.

What I'm issing here?

Sara

4:19pm 04.23.20

I really liked Biml but I cannot find anywhere how to configure and get working an in the Helper. I see here you have templates for Mist but not an example of how to do an scd type 2 (that is my interest), or 1.

Is that possible to add a code example?